Image-to-Image Translation with Conditional Adversarial Networks (CVPR 2017)

Pix2Pix Code Practice

- Author: 이인엽 (dldlsduq94@korea.ac.kr)

- Paper: Image-to-Image Translation with Conditional Adversarial Networks (CVPR 2017)

- Let's train pix2pix, a representative model for translating images from one domain to another.

- Training dataset: Facades (3×256×256)

Loading the training dataset

- Load the Facades dataset for training.

- The dataset is very small, but it is enough to get good results.

```python
!git clone https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix
```


```python
import os

os.chdir('pytorch-CycleGAN-and-pix2pix/')

!pip install -r requirements.txt
```


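```python
!bash ./datasets/download_pix2pix_dataset.sh facades
# The script extracts the archive into ./datasets/facades inside the cloned repository,
# while the code below reads from /content/facades, so move the folder there
# (skip this line if /content/facades already exists).
!mv ./datasets/facades /content/facades
```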


Importing the required libraries

- Load the PyTorch libraries and the other modules used below.

```python
import os
import glob
import numpy as np
import matplotlib.pyplot as plt
from PIL import Image

import torch
import torch.nn as nn
from torch.utils.data import Dataset
from torch.utils.data import DataLoader
import torchvision.transforms as transforms
from torchvision.utils import save_image
```

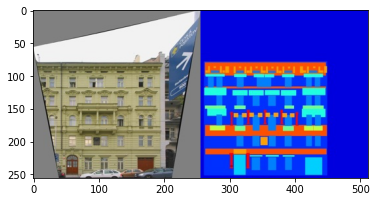

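- Display the training data.

- Each training image consists of two 256×256 images placed side by side (a paired sample).

```python
# next(os.walk(path))[2] is the list of files directly inside path
print("Number of training images (A/B pairs):", len(next(os.walk('/content/facades/train/'))[2]))
print("Number of validation images (A/B pairs):", len(next(os.walk('/content/facades/val/'))[2]))
print("Number of test images (A/B pairs):", len(next(os.walk('/content/facades/test/'))[2]))
```

Number of training images (A/B pairs): 400

Number of validation images (A/B pairs): 100

Number of test images (A/B pairs): 106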
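The split sizes above determine how many batches one epoch contains. The sketch below does that arithmetic for this notebook's settings. It assumes two things: that `mode="train"` also appends the 106 test images, as the `ImageDataset` class defined below does, and that the validation loader reads only the val split. It is plain Python and needs no data.

```python
import math

# Split sizes printed above
n_train, n_val, n_test = 400, 100, 106
batch_size = 10   # batch size used for both DataLoaders in this notebook
n_epochs = 200    # number of epochs used in the training loop

# ImageDataset(mode="train") also appends the test images to the training files
n_train_used = n_train + n_test

# DataLoader keeps the last, smaller batch by default (drop_last=False), hence ceil
train_batches = math.ceil(n_train_used / batch_size)
val_batches = math.ceil(n_val / batch_size)
total_train_batches = n_epochs * train_batches

print(n_train_used, train_batches, val_batches, total_train_batches)  # 506 51 10 10200
```

With `sample_interval = 200`, this works out to one sample roughly every 4 epochs (200 / 51 ≈ 3.9). That figure assumes the batch counter keeps running across epochs, which this section does not show.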

```python
# Show a single pair (left: target, right: condition)
image = Image.open('/content/facades/train/9.jpg')
# PIL reports the size as (width, height)
print("Image size:", image.size)
plt.imshow(image)
plt.show()
```

Image size: (512, 256)

- Define a custom Dataset class.

```python
from PIL import ImageOps


# Custom dataset that inherits from torch.utils.data.Dataset
class ImageDataset(Dataset):
    # Collect the file paths
    def __init__(self, root, transforms_=None, mode="train"):
        self.transform = transforms_
        # glob.glob() returns a list of the paths that match a pattern. It supports only
        # shell-style wildcards such as '*' and '?', not regular expressions.
        # sorted() builds a sorted list from any iterable, so the file order is deterministic.
        self.files = sorted(glob.glob(os.path.join(root, mode) + "/*.jpg"))
        # The dataset is small, so the test images are also used for training
        if mode == "train":
            self.files.extend(sorted(glob.glob(os.path.join(root, "test") + "/*.jpg")))

    # Load and return the index-th sample
    def __getitem__(self, index):
        img = Image.open(self.files[index % len(self.files)])
        w, h = img.size
        img_A = img.crop((0, 0, w // 2, h))  # left half: target
        img_B = img.crop((w // 2, 0, w, h))  # right half: condition

        # Data augmentation: horizontal flip, applied to both halves together
        if np.random.random() < 0.5:
            img_A = ImageOps.mirror(img_A)
            img_B = ImageOps.mirror(img_B)

        if self.transform is not None:
            img_A = self.transform(img_A)
            img_B = self.transform(img_B)

        return {"A": img_A, "B": img_B}

    def __len__(self):
        return len(self.files)
```
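The core of `__getitem__` does two things. It cuts the side-by-side image in half, then flips both halves using one random draw, so the target and the condition stay pixel-aligned. Below is a minimal NumPy sketch of that logic on a toy array. The function name `split_and_flip` is hypothetical and is not part of the notebook.

```python
import numpy as np

def split_and_flip(pair, rng):
    """Split a side-by-side (H, 2W, C) array into its left half A and right half B,
    then flip BOTH halves horizontally with probability 0.5 using a single draw."""
    w = pair.shape[1] // 2
    img_a = pair[:, :w]   # left half: target (A)
    img_b = pair[:, w:]   # right half: condition (B)
    if rng.random() < 0.5:
        # One decision for both halves keeps target and condition pixel-aligned
        img_a = img_a[:, ::-1]
        img_b = img_b[:, ::-1]
    return img_a, img_b

# Toy stand-in for a 256 x 512 x 3 Facades image: H=2, 2W=8, C=3
pair = np.arange(2 * 8 * 3).reshape(2, 8, 3)
rng = np.random.default_rng(0)
a, b = split_and_flip(pair, rng)
print(a.shape, b.shape)  # (2, 4, 3) (2, 4, 3)
```

Flipping the two halves independently would break the pixel correspondence that the L1 loss relies on.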

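```python
# Image transforms (preprocessing)
transforms_ = transforms.Compose([
    # Resize to 256 x 256 with bicubic interpolation
    transforms.Resize((256, 256), interpolation=transforms.InterpolationMode.BICUBIC),
    # PIL image (H x W x C, values 0-255) -> float tensor (C x H x W, values in [0, 1])
    transforms.ToTensor(),
    # Normalize(mean, std) computes (x - mean) / std per channel; with 0.5 / 0.5 it maps [0, 1] to [-1, 1]
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),
])

# Wrap the data with the custom class defined above
train_dataset = ImageDataset("/content/facades", transforms_=transforms_)
# mode="val" is needed here; the default mode="train" would load the training images again
val_dataset = ImageDataset("/content/facades", transforms_=transforms_, mode="val")

# num_workers=2: the maximum PyTorch suggested for the Colab runtime used here; adjust for your machine
train_dataloader = DataLoader(train_dataset, batch_size=10, shuffle=True, num_workers=2)
val_dataloader = DataLoader(val_dataset, batch_size=10, shuffle=True, num_workers=2)
```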

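`Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))` does not turn the data into a normal distribution. It applies `(x - mean) / std` to each channel. `ToTensor()` has already scaled pixels to [0, 1], so the result lies in [-1, 1], the same range as the generator's final Tanh. Below is a plain-Python sketch of the mapping and its inverse. The helper names are illustrative.

```python
def normalize(x, mean=0.5, std=0.5):
    # Same per-channel formula as transforms.Normalize: (x - mean) / std
    return (x - mean) / std

def denormalize(y, mean=0.5, std=0.5):
    # Inverse mapping: back from [-1, 1] to [0, 1]
    return y * std + mean

# ToTensor() output range [0, 1] lands in [-1, 1]
for x in (0.0, 0.5, 1.0):
    print(x, "->", normalize(x))
```

You would apply the inverse, `y * 0.5 + 0.5`, to a generator output before plotting it as an ordinary image.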

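Defining the generator and discriminator models

- pix2pix has a cGAN structure: it takes an image as the condition and outputs an image.

- Because the input and output have the same dimensions, it uses U-Net as the generator architecture.

- U-Net's skip connections let a large amount of low-level information be shared between the input and the output.

- The encoder's outputs are also fed directly into the decoder.

- Somewhat like residual learning, the network then only has to learn what the encoder's features do not already provide. This makes training easier and tends to give better results.

- The U-Net structure is shown in the figure above.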
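The skip connections concatenate rather than add. As a result, each decoder block's input channel count equals its predecessor's output channels plus the matching encoder channels. The sketch below checks that bookkeeping for the channel sizes used in `GeneratorUNet`. The lists are copied from that code, and the pairing (up1 with down7, ..., up7 with down1) follows its `forward()`.

```python
# Output channels of the encoder blocks down1..down8
encoder_out = [64, 128, 256, 512, 512, 512, 512, 512]
# Output channels of the ConvTranspose2d inside up1..up7, before concatenation
decoder_out = [512, 512, 512, 512, 256, 128, 64]
# in_channels of up2..up7 and of the final Conv2d
decoder_in = [1024, 1024, 1024, 1024, 512, 256, 128]

# up1 <-> down7, up2 <-> down6, ..., up7 <-> down1 (down8 is the bottleneck)
skips = encoder_out[-2::-1]
concat = [out + skip for out, skip in zip(decoder_out, skips)]

# Each concatenated output must match the next block's expected input
assert concat == decoder_in
print(concat)  # [1024, 1024, 1024, 1024, 512, 256, 128]
```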

```python
# Down-sampling block of the U-Net
class UNetDown(nn.Module):
    def __init__(self, in_channels, out_channels, normalize=True, dropout=0.0):
        super(UNetDown, self).__init__()
        # A standard conv layer: channels grow while width and height are halved (downsampling)
        layers = [nn.Conv2d(in_channels, out_channels, kernel_size=4, stride=2, padding=1, bias=False)]
        if normalize:
            layers.append(nn.InstanceNorm2d(out_channels))
        # LeakyReLU with negative slope 0.2: for negative inputs the output is 0.2 * x
        layers.append(nn.LeakyReLU(0.2))
        if dropout:
            # Dropout randomly zeroes this fraction of the activations during training
            layers.append(nn.Dropout(dropout))
        # Chain the layers into a single module
        self.model = nn.Sequential(*layers)

    def forward(self, x):
        return self.model(x)


# Up-sampling block of the U-Net, with a skip connection.
# Skip connection: the output of an encoder layer bypasses the layers in between
# and is concatenated to the input of the matching decoder layer.
class UNetUp(nn.Module):
    def __init__(self, in_channels, out_channels, dropout=0.0):
        super(UNetUp, self).__init__()
        # A standard transposed conv layer: channels shrink while width and height are doubled (upsampling)
        layers = [nn.ConvTranspose2d(in_channels, out_channels, kernel_size=4, stride=2, padding=1, bias=False)]
        layers.append(nn.InstanceNorm2d(out_channels))
        # inplace=True overwrites the input tensor instead of allocating a new one, which saves memory
        layers.append(nn.ReLU(inplace=True))
        if dropout:
            layers.append(nn.Dropout(dropout))
        self.model = nn.Sequential(*layers)

    def forward(self, x, skip_input):
        x = self.model(x)
        # Concatenate the encoder's low-level features (skip_input) along the channel dimension,
        # so the number of channels grows
        x = torch.cat((x, skip_input), 1)
        return x
```
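During training, `nn.Dropout(p)` zeroes each activation with probability p; p is the fraction dropped, not the fraction kept. It rescales the surviving activations by 1 / (1 - p), and in `eval()` mode it does nothing. Below is a NumPy sketch of this "inverted dropout" rule, written to illustrate the idea rather than copied from PyTorch's implementation.

```python
import numpy as np

def inverted_dropout(x, p, rng):
    """Zero each element with probability p and scale the survivors by 1 / (1 - p),
    so the expected value of every element is unchanged."""
    keep = rng.random(x.shape) >= p          # True with probability 1 - p
    return np.where(keep, x / (1.0 - p), 0.0)

rng = np.random.default_rng(0)
x = np.ones(100_000)
y = inverted_dropout(x, p=0.5, rng=rng)
print(np.unique(y))  # only the values 0.0 and 2.0 appear
print(y.mean())      # close to 1.0, the mean of x
```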

```python
# U-Net generator
class GeneratorUNet(nn.Module):
    def __init__(self, in_channels=3, out_channels=3):
        super(GeneratorUNet, self).__init__()
        # Encoder
        self.down1 = UNetDown(in_channels, 64, normalize=False)        # output: [64 x 128 x 128]
        self.down2 = UNetDown(64, 128)                                  # output: [128 x 64 x 64]
        self.down3 = UNetDown(128, 256)                                 # output: [256 x 32 x 32]
        self.down4 = UNetDown(256, 512, dropout=0.5)                    # output: [512 x 16 x 16]
        self.down5 = UNetDown(512, 512, dropout=0.5)                    # output: [512 x 8 x 8]
        self.down6 = UNetDown(512, 512, dropout=0.5)                    # output: [512 x 4 x 4]
        self.down7 = UNetDown(512, 512, dropout=0.5)                    # output: [512 x 2 x 2]
        # Bottleneck (the middle of the U in the figure); no InstanceNorm on a 1 x 1 map
        self.down8 = UNetDown(512, 512, normalize=False, dropout=0.5)  # output: [512 x 1 x 1]

        # Decoder with skip connections (ConvTranspose output channels x 2 == next block's input channels)
        self.up1 = UNetUp(512, 512, dropout=0.5)   # output: [1024 x 2 x 2]
        self.up2 = UNetUp(1024, 512, dropout=0.5)  # output: [1024 x 4 x 4]
        self.up3 = UNetUp(1024, 512, dropout=0.5)  # output: [1024 x 8 x 8]
        self.up4 = UNetUp(1024, 512, dropout=0.5)  # output: [1024 x 16 x 16]
        self.up5 = UNetUp(1024, 256)               # output: [512 x 32 x 32]
        self.up6 = UNetUp(512, 128)                # output: [256 x 64 x 64]
        self.up7 = UNetUp(256, 64)                 # output: [128 x 128 x 128]

        self.final = nn.Sequential(
            # Conv output size: OH = (H + 2P - FH) / S + 1, OW = (W + 2P - FW) / S + 1
            nn.Upsample(scale_factor=2),  # output: [128 x 256 x 256]
            # Padding order is (left, right, top, bottom): one extra column on the left and row on top -> 257 x 257
            nn.ZeroPad2d((1, 0, 1, 0)),
            # out_channels (3) kernels of size 128 x 4 x 4 give 3 output channels
            nn.Conv2d(128, out_channels, kernel_size=4, padding=1),  # output: [3 x 256 x 256]
            # Tanh maps every pixel of every channel into (-1, 1)
            nn.Tanh(),
        )

    def forward(self, x):
        # Encoder: successive downsampling
        d1 = self.down1(x)
        d2 = self.down2(d1)
        d3 = self.down3(d2)
        d4 = self.down4(d3)
        d5 = self.down5(d4)
        d6 = self.down6(d5)
        d7 = self.down7(d6)
        d8 = self.down8(d7)
        # Decoder: the second argument is the skip input, concatenated to the output along the channels (see the figure)
        u1 = self.up1(d8, d7)
        u2 = self.up2(u1, d6)
        u3 = self.up3(u2, d5)
        u4 = self.up4(u3, d4)
        u5 = self.up5(u4, d3)
        u6 = self.up6(u5, d2)
        u7 = self.up7(u6, d1)
        return self.final(u7)
```
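The shape comments in `GeneratorUNet` follow from the output-size formulas for `Conv2d` and `ConvTranspose2d`. The sketch below traces a 256-pixel side through the encoder, the decoder, and the final Upsample, ZeroPad2d and Conv2d layers. It assumes dilation 1 and no `output_padding`, the defaults used here.

```python
def conv_out(size, kernel, stride=1, padding=0):
    # Conv2d: floor((H + 2P - K) / S) + 1
    return (size + 2 * padding - kernel) // stride + 1

def conv_transpose_out(size, kernel, stride=1, padding=0):
    # ConvTranspose2d (dilation 1, output_padding 0): (H - 1) * S - 2P + K
    return (size - 1) * stride - 2 * padding + kernel

h = 256
encoder = []
for _ in range(8):              # down1..down8: k=4, s=2, p=1 halves the size
    h = conv_out(h, 4, 2, 1)
    encoder.append(h)

decoder = []
for _ in range(7):              # up1..up7: k=4, s=2, p=1 doubles the size
    h = conv_transpose_out(h, 4, 2, 1)
    decoder.append(h)

h = h * 2                       # nn.Upsample(scale_factor=2)
h = h + 1                       # nn.ZeroPad2d((1, 0, 1, 0)) adds one row and one column
h = conv_out(h, 4, 1, 1)        # final Conv2d: k=4, s=1, p=1

print(encoder)  # [128, 64, 32, 16, 8, 4, 2, 1]
print(decoder)  # [2, 4, 8, 16, 32, 64, 128]
print(h)        # 256
```

The pad-then-convolve step exists because a 4×4 kernel with stride 1 and padding 1 would otherwise shrink 256 to 255. The one-pixel asymmetric padding brings the output back to exactly 256.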

```python
class Discriminator(nn.Module):
    def __init__(self, in_channels=3):
        super(Discriminator, self).__init__()

        def discriminator_block(in_channels, out_channels, normalization=True):
            # Width and height are halved
            layers = [nn.Conv2d(in_channels, out_channels, kernel_size=4, stride=2, padding=1)]
            if normalization:
                layers.append(nn.InstanceNorm2d(out_channels))
            layers.append(nn.LeakyReLU(0.2, inplace=True))
            return layers

        self.model = nn.Sequential(
            # Two images (real or generated image + condition image) come in together,
            # so the number of input channels is doubled (6)
            *discriminator_block(in_channels * 2, 64, normalization=False),  # output: [64 x 128 x 128]
            *discriminator_block(64, 128),    # output: [128 x 64 x 64]
            *discriminator_block(128, 256),   # output: [256 x 32 x 32]
            *discriminator_block(256, 512),   # output: [512 x 16 x 16]
            nn.ZeroPad2d((1, 0, 1, 0)),       # output: [512 x 17 x 17]
            nn.Conv2d(512, 1, kernel_size=4, padding=1, bias=False),  # output: [1 x 16 x 16]
        )

    # img_A: real or generated image, img_B: condition image
    def forward(self, img_A, img_B):
        # Concatenate the two images along the channel dimension to build the input
        img_input = torch.cat((img_A, img_B), 1)
        return self.model(img_input)
```
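Each of this discriminator's 16×16 outputs judges one patch of the input pair; this design is called a PatchGAN. The patch size is the receptive field of one output unit, computed backward through the (kernel, stride) pairs. The sketch below applies that recursion to the layers above. For comparison, it also applies it to the stride pattern of the 70×70 PatchGAN in the official pix2pix code (strides 2, 2, 2, 1, 1).

```python
def receptive_field(layers):
    """Receptive field (in input pixels) of one output unit of a stack of conv layers.
    `layers` lists (kernel, stride) from input to output; padding does not change the size."""
    r = 1
    for k, s in reversed(layers):
        r = r * s + (k - s)
    return r

# Discriminator above: four k=4 / s=2 blocks, then ZeroPad2d and a k=4 / s=1 conv
this_d = [(4, 2), (4, 2), (4, 2), (4, 2), (4, 1)]
# 70 x 70 PatchGAN of the pix2pix paper / official code: strides 2, 2, 2, 1, 1
paper_d = [(4, 2), (4, 2), (4, 2), (4, 1), (4, 1)]

print(receptive_field(this_d), receptive_field(paper_d))  # 94 70
```

By this calculation, the discriminator in this notebook sees 94×94 patches. That is somewhat larger than the paper's 70×70, because its fourth block also uses stride 2.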

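Model training and sampling

- Initialize the G and D models for training.

- Set suitable hyperparameters.

- Use suitable loss functions.

- pix2pix adds an L1 loss so that the output image stays close to the ground truth. It uses L1 rather than L2 because L1 encourages less blurring.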
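Why L1 rather than L2? A common intuition: suppose several outputs are plausible for the same pixel. The constant that minimizes L2 is their mean, a gray blend. The constant that minimizes L1 is their median, which is one of the actual values. The toy search below illustrates this on three hypothetical target values. It is an illustration only, not an experiment from the paper.

```python
# Three equally likely ground-truth values for one pixel: two black (0.0), one white (1.0)
targets = [0.0, 0.0, 1.0]
candidates = [i / 100 for i in range(101)]  # constant predictions 0.00 .. 1.00

def l2(c):
    return sum((c - t) ** 2 for t in targets) / len(targets)

def l1(c):
    return sum(abs(c - t) for t in targets) / len(targets)

best_l2 = min(candidates, key=l2)  # near the mean 1/3: a blurry gray
best_l1 = min(candidates, key=l1)  # the median 0.0: a sharp, actual value
print(best_l2, best_l1)  # 0.33 0.0
```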

```python
def weights_init_normal(m):
    # Name of the module's class, e.g. "Conv2d" or "ConvTranspose2d"
    classname = m.__class__.__name__
    # str.find() returns -1 when the substring is not found
    # Conv layers: weights drawn from N(0, 0.02)
    if classname.find("Conv") != -1:
        torch.nn.init.normal_(m.weight.data, 0.0, 0.02)
    # BatchNorm2d: weights from N(1.0, 0.02), bias set to 0
    # (this model uses InstanceNorm2d without affine parameters, so this branch is not triggered here)
    elif classname.find("BatchNorm2d") != -1:
        # normal_() fills the tensor in place with samples from the normal distribution;
        # no gradient is recorded for this operation
        torch.nn.init.normal_(m.weight.data, 1.0, 0.02)
        # constant_() fills the tensor in place with the given scalar
        torch.nn.init.constant_(m.bias.data, 0.0)
```
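`weights_init_normal` decides what to do purely from the class name, so it helps to see which of this model's modules match. The sketch reproduces only its string checks. `init_rule` is a hypothetical helper that returns the (mean, std) the function would use for the weight, or None when the module is left alone.

```python
def init_rule(classname):
    # Same string checks as weights_init_normal
    if classname.find("Conv") != -1:
        return (0.0, 0.02)
    elif classname.find("BatchNorm2d") != -1:
        return (1.0, 0.02)
    return None  # module keeps PyTorch's default initialization

for name in ["Conv2d", "ConvTranspose2d", "BatchNorm2d", "InstanceNorm2d", "LeakyReLU"]:
    print(name, init_rule(name))
```

"Conv" also matches `ConvTranspose2d`, so the decoder's transposed convolutions are initialized too. `InstanceNorm2d` matches neither branch; with its default `affine=False` it has no weight or bias to initialize anyway.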

```python
# Initialize the generator and the discriminator
generator = GeneratorUNet()
discriminator = Discriminator()

# Move both models to the GPU: CUDA tensors implement the same operations as CPU tensors,
# but use the GPU for computation
generator.cuda()
discriminator.cuda()

# Initialize the weights: apply(fn) calls fn recursively on every submodule
# (as returned by .children()) as well as on the module itself
generator.apply(weights_init_normal)
discriminator.apply(weights_init_normal)
```

```python
# Loss functions
# Adversarial loss: MSE (least-squares GAN) against the real/fake labels
criterion_GAN = torch.nn.MSELoss()
# Pixel-wise L1 loss that pulls the output toward the ground-truth image
criterion_pixelwise = torch.nn.L1Loss()

criterion_GAN.cuda()
criterion_pixelwise.cuda()
```
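Putting the two criteria together, the generator minimizes a least-squares adversarial term plus `lambda_pixel` times the L1 term. The pix2pix paper uses the standard cross-entropy GAN loss; the MSE version here is a least-squares GAN (LSGAN) variant. Below is a NumPy sketch of both objectives on toy arrays. The function names are hypothetical. Halving the discriminator loss follows the paper, but whether this notebook's discriminator step does the same is an assumption here.

```python
import numpy as np

lambda_pixel = 100  # same weight as in this notebook's training loop

def generator_loss(d_fake, fake_b, real_b):
    # Least-squares adversarial term: push D(fake_B, condition) toward the "real" label 1
    loss_gan = np.mean((d_fake - 1.0) ** 2)
    # Pixel-wise L1 term toward the ground-truth image
    loss_pixel = np.mean(np.abs(fake_b - real_b))
    return loss_gan + lambda_pixel * loss_pixel

def discriminator_loss(d_real, d_fake):
    # Real pairs toward 1, generated pairs toward 0; the 0.5 factor halves D's objective
    return 0.5 * (np.mean((d_real - 1.0) ** 2) + np.mean(d_fake ** 2))

# Toy values: one 1 x 16 x 16 patch map from D and a 3 x 4 x 4 "image"
d_fake = np.full((1, 1, 16, 16), 0.5)
fake_b = np.zeros((1, 3, 4, 4))
real_b = np.full((1, 3, 4, 4), 0.1)
print(generator_loss(d_fake, fake_b, real_b))  # about 0.25 + 100 * 0.1 = 10.25
```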

```python
# Learning rate
lr = 0.0002

# Optimizers for the generator and the discriminator
# parameters(): returns an iterator over the module's parameters, which is what an optimizer expects
# betas: coefficients for the running averages of the gradient and of its square
optimizer_G = torch.optim.Adam(generator.parameters(), lr=lr, betas=(0.5, 0.999))
optimizer_D = torch.optim.Adam(discriminator.parameters(), lr=lr, betas=(0.5, 0.999))
```
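The `betas` argument controls Adam's two running averages. Setting β1 = 0.5 instead of the default 0.9 gives the gradient average a much shorter memory. pix2pix uses lr = 0.0002 with β1 = 0.5 and β2 = 0.999, following DCGAN. Below is a scalar sketch of one Adam step, written to mirror the usual update rule rather than PyTorch's exact code.

```python
import math

def adam_step(param, grad, state, lr=0.0002, betas=(0.5, 0.999), eps=1e-8):
    """One Adam update for a single scalar parameter; `state` holds m, v and the step count t."""
    b1, b2 = betas
    state["t"] += 1
    state["m"] = b1 * state["m"] + (1 - b1) * grad          # running mean of gradients
    state["v"] = b2 * state["v"] + (1 - b2) * grad * grad   # running mean of squared gradients
    m_hat = state["m"] / (1 - b1 ** state["t"])             # bias correction
    v_hat = state["v"] / (1 - b2 ** state["t"])
    return param - lr * m_hat / (math.sqrt(v_hat) + eps)

state = {"m": 0.0, "v": 0.0, "t": 0}
p = adam_step(1.0, grad=3.0, state=state)
print(1.0 - p)  # roughly lr: the first step size does not depend on the gradient's scale
```

On the first step, the bias-corrected ratio m_hat / sqrt(v_hat) equals the sign of the gradient, so the parameter moves by almost exactly lr.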

- 모델을 학습하면서 주기적으로 샘플링하여 결과를 확인할 수 있다.

import time
from torchvision.utils import save_image

n_epochs = 200  # number of training epochs
sample_interval = 200  # save a sample image every this many batches

# Weight of the pixel-wise L1 loss between the translated image and the ground truth
lambda_pixel = 100

start_time = time.time()

for epoch in range(n_epochs):
    # enumerate() yields (index, item) tuples, unpacked here into i and batch:
    # i counts 0, 1, 2, ... and batch is the next item from train_dataloader
    for i, batch in enumerate(train_dataloader):
        # Load the model inputs. train_dataloader wraps ImageDataset, whose items
        # are dicts of the form {"A": img_A, "B": img_B}:
        #   img_A: the real photo (the image to be produced)
        #   img_B: the condition image
        real_A = batch["B"].cuda()  # condition image
        real_B = batch["A"].cuda()  # ground-truth translated image

        # Labels for the discriminator's 16x16 patch output
        real = torch.ones(real_A.size(0), 1, 16, 16, device="cuda")   # real: 1
        fake = torch.zeros(real_B.size(0), 1, 16, 16, device="cuda")  # fake: 0

        # ---- Train the generator ----
        optimizer_G.zero_grad()  # reset the gradients of all optimized tensors

        # Fake image translated from the condition image real_A
        fake_B = generator(real_A)

        # Adversarial loss: MSE between D's output on (fake_B, real_A) and the label 'real'
        # (the discriminator concatenates fake_B and real_A along the channel dimension)
        loss_GAN = criterion_GAN(discriminator(fake_B, real_A), real)

        # Pixel-wise L1 loss between the generated image and the ground truth real_B
        loss_pixel = criterion_pixelwise(fake_B, real_B)

        # Total generator loss
        loss_G = loss_GAN + lambda_pixel * loss_pixel

        # Update the generator: backward() uses autograd to differentiate the scalar
        # loss with respect to every weight, traversing the graph recorded during the
        # forward pass; step() then performs a single optimization step
        loss_G.backward()
        optimizer_G.step()

        # ---- Train the discriminator ----
        optimizer_D.zero_grad()

        # Discriminator loss; both terms use real_A as the condition.
        # detach() keeps this update from back-propagating into the generator
        loss_real = criterion_GAN(discriminator(real_B, real_A), real)
        loss_fake = criterion_GAN(discriminator(fake_B.detach(), real_A), fake)
        loss_D = (loss_real + loss_fake) / 2

        # Update the discriminator
        loss_D.backward()
        optimizer_D.step()

        done = epoch * len(train_dataloader) + i
        if done % sample_interval == 0:
            with torch.no_grad():  # sampling only, no gradients needed
                imgs = next(iter(val_dataloader))  # translate 10 validation images
                real_A = imgs["B"].cuda()
                real_B = imgs["A"].cuda()
                fake_B = generator(real_A)
            # real_A: condition, fake_B: translated image, real_B: ground truth,
            # concatenated along the height dimension
            img_sample = torch.cat((real_A.data, fake_B.data, real_B.data), -2)
            save_image(img_sample, f"{done}.png", nrow=5, normalize=True)

    # Report the losses of the epoch's last batch
    print(f"[Epoch {epoch}/{n_epochs}] [D loss: {loss_D.item():.6f}] [G pixel loss: {loss_pixel.item():.6f}, adv loss: {loss_GAN.item()}] [Elapsed time: {time.time() - start_time:.2f}s]")

/usr/local/lib/python3.7/dist-packages/torch/utils/data/dataloader.py:481: UserWarning: This DataLoader will create 4 worker processes in total. Our suggested max number of worker in current system is 2, which is smaller than what this DataLoader is going to create. Please be aware that excessive worker creation might get DataLoader running slow or even freeze, lower the worker number to avoid potential slowness/freeze if necessary.

cpuset_checked))

[Epoch 0/200] [D loss: 0.381288] [G pixel loss: 0.376942, adv loss: 0.6545624732971191] [Elapsed time: 49.09s]

[Epoch 1/200] [D loss: 0.192580] [G pixel loss: 0.355091, adv loss: 0.7536435127258301] [Elapsed time: 97.05s]

[Epoch 2/200] [D loss: 0.149096] [G pixel loss: 0.345436, adv loss: 0.7810912132263184] [Elapsed time: 145.01s]

[Epoch 3/200] [D loss: 0.225314] [G pixel loss: 0.341125, adv loss: 1.3068674802780151] [Elapsed time: 193.78s]

[Epoch 4/200] [D loss: 0.143116] [G pixel loss: 0.353989, adv loss: 0.5862461924552917] [Elapsed time: 241.68s]

[Epoch 5/200] [D loss: 0.054412] [G pixel loss: 0.331029, adv loss: 0.9602774381637573] [Elapsed time: 289.60s]

[Epoch 6/200] [D loss: 0.052271] [G pixel loss: 0.367796, adv loss: 0.7469775676727295] [Elapsed time: 337.40s]

[Epoch 7/200] [D loss: 0.174204] [G pixel loss: 0.325465, adv loss: 0.471322238445282] [Elapsed time: 386.14s]

[Epoch 8/200] [D loss: 0.078990] [G pixel loss: 0.341791, adv loss: 0.5842515230178833] [Elapsed time: 434.01s]

[Epoch 9/200] [D loss: 0.063996] [G pixel loss: 0.342018, adv loss: 0.7872447967529297] [Elapsed time: 481.80s]

[Epoch 10/200] [D loss: 0.066601] [G pixel loss: 0.362324, adv loss: 0.6874423027038574] [Elapsed time: 529.70s]

[Epoch 11/200] [D loss: 0.076826] [G pixel loss: 0.289537, adv loss: 0.6844277381896973] [Elapsed time: 578.38s]

[Epoch 12/200] [D loss: 0.085551] [G pixel loss: 0.309521, adv loss: 0.9681031703948975] [Elapsed time: 626.23s]

[Epoch 13/200] [D loss: 0.039946] [G pixel loss: 0.394171, adv loss: 1.1878159046173096] [Elapsed time: 674.26s]

[Epoch 14/200] [D loss: 0.057577] [G pixel loss: 0.310740, adv loss: 0.9615353941917419] [Elapsed time: 722.18s]

[Epoch 15/200] [D loss: 0.050391] [G pixel loss: 0.368409, adv loss: 0.8237900733947754] [Elapsed time: 771.12s]

[Epoch 16/200] [D loss: 0.105165] [G pixel loss: 0.379902, adv loss: 0.6350650787353516] [Elapsed time: 819.03s]

[Epoch 17/200] [D loss: 0.041258] [G pixel loss: 0.309569, adv loss: 0.9446393847465515] [Elapsed time: 867.05s]

[Epoch 18/200] [D loss: 0.088850] [G pixel loss: 0.256369, adv loss: 1.3284671306610107] [Elapsed time: 914.94s]

[Epoch 19/200] [D loss: 0.065282] [G pixel loss: 0.315536, adv loss: 0.6887872219085693] [Elapsed time: 963.83s]

[Epoch 20/200] [D loss: 0.042505] [G pixel loss: 0.289952, adv loss: 0.8647300004959106] [Elapsed time: 1011.81s]

[Epoch 21/200] [D loss: 0.060231] [G pixel loss: 0.313320, adv loss: 0.7799541354179382] [Elapsed time: 1059.78s]

[Epoch 22/200] [D loss: 0.039047] [G pixel loss: 0.308499, adv loss: 0.7362779378890991] [Elapsed time: 1107.59s]

[Epoch 23/200] [D loss: 0.079212] [G pixel loss: 0.297559, adv loss: 0.6167521476745605] [Elapsed time: 1156.35s]

[Epoch 24/200] [D loss: 0.110600] [G pixel loss: 0.297773, adv loss: 1.5952587127685547] [Elapsed time: 1204.19s]

[Epoch 25/200] [D loss: 0.065958] [G pixel loss: 0.315930, adv loss: 0.5173804759979248] [Elapsed time: 1251.93s]

[Epoch 26/200] [D loss: 0.035456] [G pixel loss: 0.289858, adv loss: 1.190037727355957] [Elapsed time: 1299.71s]

[Epoch 27/200] [D loss: 0.051859] [G pixel loss: 0.293352, adv loss: 1.2759935855865479] [Elapsed time: 1348.53s]

[Epoch 28/200] [D loss: 0.067366] [G pixel loss: 0.299187, adv loss: 0.8222799301147461] [Elapsed time: 1396.56s]

[Epoch 29/200] [D loss: 0.068348] [G pixel loss: 0.255664, adv loss: 1.340897560119629] [Elapsed time: 1444.37s]

[Epoch 30/200] [D loss: 0.089547] [G pixel loss: 0.291004, adv loss: 0.5226625800132751] [Elapsed time: 1492.13s]

[Epoch 31/200] [D loss: 0.081532] [G pixel loss: 0.269906, adv loss: 0.7131866812705994] [Elapsed time: 1540.93s]

[Epoch 32/200] [D loss: 0.061981] [G pixel loss: 0.259353, adv loss: 0.6018949151039124] [Elapsed time: 1588.93s]

[Epoch 33/200] [D loss: 0.060490] [G pixel loss: 0.265419, adv loss: 0.6979140043258667] [Elapsed time: 1636.79s]

[Epoch 34/200] [D loss: 0.050280] [G pixel loss: 0.263957, adv loss: 1.1079914569854736] [Elapsed time: 1684.86s]

[Epoch 35/200] [D loss: 0.065202] [G pixel loss: 0.260654, adv loss: 0.5337224006652832] [Elapsed time: 1733.74s]

[Epoch 36/200] [D loss: 0.069398] [G pixel loss: 0.285417, adv loss: 0.5545682311058044] [Elapsed time: 1781.70s]

[Epoch 37/200] [D loss: 0.027014] [G pixel loss: 0.295575, adv loss: 0.8494169116020203] [Elapsed time: 1829.59s]

[Epoch 38/200] [D loss: 0.074418] [G pixel loss: 0.275254, adv loss: 0.5166867971420288] [Elapsed time: 1877.59s]

[Epoch 39/200] [D loss: 0.078900] [G pixel loss: 0.229593, adv loss: 1.2745400667190552] [Elapsed time: 1926.48s]

[Epoch 40/200] [D loss: 0.049240] [G pixel loss: 0.257130, adv loss: 0.7442737817764282] [Elapsed time: 1974.52s]

[Epoch 41/200] [D loss: 0.118156] [G pixel loss: 0.279518, adv loss: 0.4168187975883484] [Elapsed time: 2022.49s]

[Epoch 42/200] [D loss: 0.045100] [G pixel loss: 0.264872, adv loss: 0.6888045072555542] [Elapsed time: 2070.29s]

[Epoch 43/200] [D loss: 0.042551] [G pixel loss: 0.258340, adv loss: 0.7977311015129089] [Elapsed time: 2119.30s]

[Epoch 44/200] [D loss: 0.025458] [G pixel loss: 0.248376, adv loss: 1.0759673118591309] [Elapsed time: 2167.28s]

[Epoch 45/200] [D loss: 0.110448] [G pixel loss: 0.256910, adv loss: 0.38716554641723633] [Elapsed time: 2215.10s]

[Epoch 46/200] [D loss: 0.087167] [G pixel loss: 0.261619, adv loss: 1.1514577865600586] [Elapsed time: 2262.96s]

[Epoch 47/200] [D loss: 0.040348] [G pixel loss: 0.257065, adv loss: 1.0923411846160889] [Elapsed time: 2311.64s]

[Epoch 48/200] [D loss: 0.077049] [G pixel loss: 0.255525, adv loss: 1.0998553037643433] [Elapsed time: 2359.48s]

[Epoch 49/200] [D loss: 0.044824] [G pixel loss: 0.218836, adv loss: 1.2029449939727783] [Elapsed time: 2407.46s]

[Epoch 50/200] [D loss: 0.037130] [G pixel loss: 0.264936, adv loss: 0.93736332654953] [Elapsed time: 2456.60s]

[Epoch 51/200] [D loss: 0.035223] [G pixel loss: 0.235452, adv loss: 0.8149962425231934] [Elapsed time: 2504.60s]

[Epoch 52/200] [D loss: 0.028359] [G pixel loss: 0.212014, adv loss: 0.9162178039550781] [Elapsed time: 2552.50s]

[Epoch 53/200] [D loss: 0.115831] [G pixel loss: 0.228224, adv loss: 1.1170363426208496] [Elapsed time: 2600.35s]

[Epoch 54/200] [D loss: 0.029847] [G pixel loss: 0.233618, adv loss: 0.9819955825805664] [Elapsed time: 2649.14s]

[Epoch 55/200] [D loss: 0.041420] [G pixel loss: 0.227949, adv loss: 0.6626397371292114] [Elapsed time: 2697.16s]

[Epoch 56/200] [D loss: 0.043162] [G pixel loss: 0.245179, adv loss: 0.9243547320365906] [Elapsed time: 2745.11s]

[Epoch 57/200] [D loss: 0.042623] [G pixel loss: 0.225400, adv loss: 0.7696890830993652] [Elapsed time: 2793.19s]

[Epoch 58/200] [D loss: 0.038320] [G pixel loss: 0.239521, adv loss: 1.221077799797058] [Elapsed time: 2841.93s]

[Epoch 59/200] [D loss: 0.041262] [G pixel loss: 0.198182, adv loss: 0.8226012587547302] [Elapsed time: 2889.78s]

[Epoch 60/200] [D loss: 0.105454] [G pixel loss: 0.226028, adv loss: 0.4266689717769623] [Elapsed time: 2937.50s]

[Epoch 61/200] [D loss: 0.059939] [G pixel loss: 0.227845, adv loss: 0.7188699245452881] [Elapsed time: 2985.56s]

[Epoch 62/200] [D loss: 0.024269] [G pixel loss: 0.212256, adv loss: 0.9303908348083496] [Elapsed time: 3034.26s]

[Epoch 63/200] [D loss: 0.066482] [G pixel loss: 0.217997, adv loss: 0.839183509349823] [Elapsed time: 3082.26s]

[Epoch 64/200] [D loss: 0.080208] [G pixel loss: 0.228155, adv loss: 0.943450391292572] [Elapsed time: 3130.19s]

[Epoch 65/200] [D loss: 0.069581] [G pixel loss: 0.226142, adv loss: 0.5254068374633789] [Elapsed time: 3178.20s]

[Epoch 66/200] [D loss: 0.050510] [G pixel loss: 0.218243, adv loss: 1.1991784572601318] [Elapsed time: 3227.02s]

[Epoch 67/200] [D loss: 0.102993] [G pixel loss: 0.235961, adv loss: 1.3224527835845947] [Elapsed time: 3274.98s]

[Epoch 68/200] [D loss: 0.052456] [G pixel loss: 0.232487, adv loss: 0.9387426376342773] [Elapsed time: 3322.92s]

[Epoch 69/200] [D loss: 0.056939] [G pixel loss: 0.237723, adv loss: 0.5924131274223328] [Elapsed time: 3370.88s]

[Epoch 70/200] [D loss: 0.051492] [G pixel loss: 0.200669, adv loss: 0.883420467376709] [Elapsed time: 3419.79s]

[Epoch 71/200] [D loss: 0.092826] [G pixel loss: 0.228369, adv loss: 0.5278297662734985] [Elapsed time: 3467.64s]

[Epoch 72/200] [D loss: 0.038645] [G pixel loss: 0.182996, adv loss: 0.7274574041366577] [Elapsed time: 3515.41s]

[Epoch 73/200] [D loss: 0.035151] [G pixel loss: 0.239637, adv loss: 0.7851053476333618] [Elapsed time: 3563.28s]

[Epoch 74/200] [D loss: 0.055824] [G pixel loss: 0.205222, adv loss: 0.5974189043045044] [Elapsed time: 3612.04s]

[Epoch 75/200] [D loss: 0.029098] [G pixel loss: 0.216047, adv loss: 1.0696113109588623] [Elapsed time: 3659.88s]

[Epoch 76/200] [D loss: 0.041786] [G pixel loss: 0.205262, adv loss: 1.0595576763153076] [Elapsed time: 3707.85s]

[Epoch 77/200] [D loss: 0.054658] [G pixel loss: 0.200384, adv loss: 0.9168607592582703] [Elapsed time: 3755.88s]

[Epoch 78/200] [D loss: 0.037715] [G pixel loss: 0.221479, adv loss: 1.2193284034729004] [Elapsed time: 3804.59s]

[Epoch 79/200] [D loss: 0.032999] [G pixel loss: 0.225056, adv loss: 0.7432982325553894] [Elapsed time: 3852.51s]

[Epoch 80/200] [D loss: 0.028037] [G pixel loss: 0.233739, adv loss: 0.9614901542663574] [Elapsed time: 3900.45s]

[Epoch 81/200] [D loss: 0.066227] [G pixel loss: 0.211871, adv loss: 0.5838621854782104] [Elapsed time: 3948.24s]

[Epoch 82/200] [D loss: 0.086552] [G pixel loss: 0.222979, adv loss: 0.5007441639900208] [Elapsed time: 3997.05s]

[Epoch 83/200] [D loss: 0.020661] [G pixel loss: 0.191860, adv loss: 0.9374706149101257] [Elapsed time: 4045.01s]

[Epoch 84/200] [D loss: 0.029254] [G pixel loss: 0.223549, adv loss: 0.9884688854217529] [Elapsed time: 4092.92s]

[Epoch 85/200] [D loss: 0.063913] [G pixel loss: 0.188804, adv loss: 0.9794902801513672] [Elapsed time: 4140.78s]

[Epoch 86/200] [D loss: 0.032603] [G pixel loss: 0.207368, adv loss: 1.1193656921386719] [Elapsed time: 4189.52s]

[Epoch 87/200] [D loss: 0.050683] [G pixel loss: 0.198689, adv loss: 0.6600277423858643] [Elapsed time: 4237.52s]

[Epoch 88/200] [D loss: 0.052047] [G pixel loss: 0.184886, adv loss: 1.0955743789672852] [Elapsed time: 4285.33s]

[Epoch 89/200] [D loss: 0.032133] [G pixel loss: 0.213616, adv loss: 1.2789306640625] [Elapsed time: 4333.42s]

[Epoch 90/200] [D loss: 0.055240] [G pixel loss: 0.195986, adv loss: 0.7520836591720581] [Elapsed time: 4382.29s]

[Epoch 91/200] [D loss: 0.018873] [G pixel loss: 0.250362, adv loss: 0.9447711706161499] [Elapsed time: 4430.28s]

[Epoch 92/200] [D loss: 0.034829] [G pixel loss: 0.191047, adv loss: 1.0977423191070557] [Elapsed time: 4478.27s]

[Epoch 93/200] [D loss: 0.036279] [G pixel loss: 0.197346, adv loss: 0.9610500335693359] [Elapsed time: 4526.41s]

[Epoch 94/200] [D loss: 0.054154] [G pixel loss: 0.193912, adv loss: 1.1074565649032593] [Elapsed time: 4575.23s]

[Epoch 95/200] [D loss: 0.025659] [G pixel loss: 0.217339, adv loss: 0.8696063756942749] [Elapsed time: 4623.13s]

[Epoch 96/200] [D loss: 0.024971] [G pixel loss: 0.227698, adv loss: 1.3020386695861816] [Elapsed time: 4671.02s]

[Epoch 97/200] [D loss: 0.051727] [G pixel loss: 0.190971, adv loss: 1.073716402053833] [Elapsed time: 4719.07s]

[Epoch 98/200] [D loss: 0.092976] [G pixel loss: 0.185161, adv loss: 1.2253074645996094] [Elapsed time: 4767.86s]

[Epoch 99/200] [D loss: 0.030444] [G pixel loss: 0.194988, adv loss: 0.9471611976623535] [Elapsed time: 4815.69s]

[Epoch 100/200] [D loss: 0.022689] [G pixel loss: 0.182422, adv loss: 1.0049575567245483] [Elapsed time: 4863.62s]

[Epoch 101/200] [D loss: 0.033428] [G pixel loss: 0.220642, adv loss: 0.7620983123779297] [Elapsed time: 4912.43s]

[Epoch 102/200] [D loss: 0.027239] [G pixel loss: 0.190845, adv loss: 0.9133728742599487] [Elapsed time: 4960.32s]

[Epoch 103/200] [D loss: 0.054579] [G pixel loss: 0.192445, adv loss: 0.9957443475723267] [Elapsed time: 5008.10s]

[Epoch 104/200] [D loss: 0.031166] [G pixel loss: 0.195483, adv loss: 1.0524855852127075] [Elapsed time: 5056.14s]

[Epoch 105/200] [D loss: 0.134346] [G pixel loss: 0.185030, adv loss: 0.35340946912765503] [Elapsed time: 5104.96s]

[Epoch 106/200] [D loss: 0.036587] [G pixel loss: 0.194746, adv loss: 0.793824315071106] [Elapsed time: 5152.74s]

[Epoch 107/200] [D loss: 0.038521] [G pixel loss: 0.196390, adv loss: 1.0377753973007202] [Elapsed time: 5200.65s]

[Epoch 108/200] [D loss: 0.053480] [G pixel loss: 0.198559, adv loss: 0.6999761462211609] [Elapsed time: 5248.49s]

[Epoch 109/200] [D loss: 0.045676] [G pixel loss: 0.187569, adv loss: 0.6513010859489441] [Elapsed time: 5297.36s]

[Epoch 110/200] [D loss: 0.069132] [G pixel loss: 0.183542, adv loss: 0.6066778898239136] [Elapsed time: 5345.24s]

[Epoch 111/200] [D loss: 0.026282] [G pixel loss: 0.172196, adv loss: 0.8419086337089539] [Elapsed time: 5393.18s]

[Epoch 112/200] [D loss: 0.066565] [G pixel loss: 0.194839, adv loss: 0.898115336894989] [Elapsed time: 5441.21s]

[Epoch 113/200] [D loss: 0.018404] [G pixel loss: 0.195488, adv loss: 1.0069154500961304] [Elapsed time: 5490.05s]

[Epoch 114/200] [D loss: 0.034999] [G pixel loss: 0.174557, adv loss: 0.9553066492080688] [Elapsed time: 5538.05s]

[Epoch 115/200] [D loss: 0.028621] [G pixel loss: 0.201182, adv loss: 1.1179637908935547] [Elapsed time: 5585.99s]

[Epoch 116/200] [D loss: 0.033847] [G pixel loss: 0.207996, adv loss: 1.0239548683166504] [Elapsed time: 5633.97s]

[Epoch 117/200] [D loss: 3.286136] [G pixel loss: 0.196184, adv loss: 3.55122447013855] [Elapsed time: 5682.81s]

[Epoch 118/200] [D loss: 0.074369] [G pixel loss: 0.181119, adv loss: 0.7259426116943359] [Elapsed time: 5730.55s]

[Epoch 119/200] [D loss: 0.088055] [G pixel loss: 0.159730, adv loss: 1.0089730024337769] [Elapsed time: 5778.34s]

[Epoch 120/200] [D loss: 0.081245] [G pixel loss: 0.180452, adv loss: 1.197155237197876] [Elapsed time: 5826.11s]

[Epoch 121/200] [D loss: 0.026494] [G pixel loss: 0.188146, adv loss: 0.9939560890197754] [Elapsed time: 5874.89s]

[Epoch 122/200] [D loss: 0.040258] [G pixel loss: 0.164458, adv loss: 0.8700642585754395] [Elapsed time: 5922.78s]

[Epoch 123/200] [D loss: 0.024400] [G pixel loss: 0.190870, adv loss: 1.0706164836883545] [Elapsed time: 5970.63s]

[Epoch 124/200] [D loss: 0.029007] [G pixel loss: 0.192408, adv loss: 0.7650015354156494] [Elapsed time: 6018.56s]

[Epoch 125/200] [D loss: 0.045748] [G pixel loss: 0.163438, adv loss: 0.6413494348526001] [Elapsed time: 6067.22s]

[Epoch 126/200] [D loss: 0.041828] [G pixel loss: 0.177025, adv loss: 1.3107445240020752] [Elapsed time: 6115.22s]

[Epoch 127/200] [D loss: 0.055553] [G pixel loss: 0.180761, adv loss: 1.100488543510437] [Elapsed time: 6163.18s]

[Epoch 128/200] [D loss: 0.043807] [G pixel loss: 0.197879, adv loss: 1.1993601322174072] [Elapsed time: 6211.23s]

[Epoch 129/200] [D loss: 0.021107] [G pixel loss: 0.178910, adv loss: 1.0723681449890137] [Elapsed time: 6260.17s]

[Epoch 130/200] [D loss: 0.049353] [G pixel loss: 0.171260, adv loss: 1.2647234201431274] [Elapsed time: 6308.19s]

[Epoch 131/200] [D loss: 0.035712] [G pixel loss: 0.199795, adv loss: 0.9335449934005737] [Elapsed time: 6356.08s]

[Epoch 132/200] [D loss: 0.039342] [G pixel loss: 0.194386, adv loss: 0.8128072023391724] [Elapsed time: 6404.05s]

[Epoch 133/200] [D loss: 0.024539] [G pixel loss: 0.175065, adv loss: 0.7909610271453857] [Elapsed time: 6452.74s]

[Epoch 134/200] [D loss: 0.024563] [G pixel loss: 0.193471, adv loss: 1.0189177989959717] [Elapsed time: 6500.67s]

[Epoch 135/200] [D loss: 0.029262] [G pixel loss: 0.178664, adv loss: 0.7336101531982422] [Elapsed time: 6548.55s]

[Epoch 136/200] [D loss: 0.040150] [G pixel loss: 0.188662, adv loss: 0.6959328651428223] [Elapsed time: 6596.64s]

[Epoch 137/200] [D loss: 0.048452] [G pixel loss: 0.189568, adv loss: 0.6468292474746704] [Elapsed time: 6645.47s]

[Epoch 138/200] [D loss: 0.014524] [G pixel loss: 0.181803, adv loss: 0.9406778216362] [Elapsed time: 6693.48s]

[Epoch 139/200] [D loss: 0.016484] [G pixel loss: 0.186653, adv loss: 0.883161723613739] [Elapsed time: 6741.37s]

[Epoch 140/200] [D loss: 0.035722] [G pixel loss: 0.205902, adv loss: 0.7527605891227722] [Elapsed time: 6789.29s]

[Epoch 141/200] [D loss: 0.021733] [G pixel loss: 0.162444, adv loss: 0.8068457841873169] [Elapsed time: 6838.00s]

[Epoch 142/200] [D loss: 0.062122] [G pixel loss: 0.171460, adv loss: 1.2483985424041748] [Elapsed time: 6885.86s]

[Epoch 143/200] [D loss: 0.040015] [G pixel loss: 0.203298, adv loss: 1.3327226638793945] [Elapsed time: 6933.87s]

[Epoch 144/200] [D loss: 0.041189] [G pixel loss: 0.181297, adv loss: 0.8710848093032837] [Elapsed time: 6981.81s]

[Epoch 145/200] [D loss: 0.026046] [G pixel loss: 0.184190, adv loss: 0.8048621416091919] [Elapsed time: 7030.70s]

[Epoch 146/200] [D loss: 0.020195] [G pixel loss: 0.183135, adv loss: 0.9086407423019409] [Elapsed time: 7078.59s]

[Epoch 147/200] [D loss: 0.035239] [G pixel loss: 0.161495, adv loss: 1.1328773498535156] [Elapsed time: 7126.54s]

[Epoch 148/200] [D loss: 0.027274] [G pixel loss: 0.187972, adv loss: 0.8399996757507324] [Elapsed time: 7174.47s]

[Epoch 149/200] [D loss: 0.019699] [G pixel loss: 0.164747, adv loss: 1.0377000570297241] [Elapsed time: 7223.46s]

[Epoch 150/200] [D loss: 0.015246] [G pixel loss: 0.195141, adv loss: 1.0458558797836304] [Elapsed time: 7271.23s]

[Epoch 151/200] [D loss: 0.019110] [G pixel loss: 0.197082, adv loss: 0.9519748687744141] [Elapsed time: 7318.95s]

[Epoch 152/200] [D loss: 0.056855] [G pixel loss: 0.182716, adv loss: 0.6151430606842041] [Elapsed time: 7367.82s]

[Epoch 153/200] [D loss: 0.033678] [G pixel loss: 0.177233, adv loss: 0.9871024489402771] [Elapsed time: 7415.73s]

[Epoch 154/200] [D loss: 0.031658] [G pixel loss: 0.170103, adv loss: 1.1817864179611206] [Elapsed time: 7463.68s]

[Epoch 155/200] [D loss: 0.028353] [G pixel loss: 0.177376, adv loss: 0.7463202476501465] [Elapsed time: 7511.53s]

[Epoch 156/200] [D loss: 0.025022] [G pixel loss: 0.179925, adv loss: 0.9695056676864624] [Elapsed time: 7560.43s]

[Epoch 157/200] [D loss: 0.032364] [G pixel loss: 0.146468, adv loss: 0.789677619934082] [Elapsed time: 7608.50s]

[Epoch 158/200] [D loss: 0.028525] [G pixel loss: 0.179535, adv loss: 0.7461600303649902] [Elapsed time: 7656.57s]

[Epoch 159/200] [D loss: 0.028537] [G pixel loss: 0.180690, adv loss: 1.1370570659637451] [Elapsed time: 7704.46s]

[Epoch 160/200] [D loss: 0.014208] [G pixel loss: 0.171034, adv loss: 1.1104817390441895] [Elapsed time: 7753.40s]

[Epoch 161/200] [D loss: 0.036036] [G pixel loss: 0.180282, adv loss: 0.668064296245575] [Elapsed time: 7801.32s]

[Epoch 162/200] [D loss: 0.017487] [G pixel loss: 0.151971, adv loss: 0.9679929614067078] [Elapsed time: 7849.27s]

[Epoch 163/200] [D loss: 0.017655] [G pixel loss: 0.164969, adv loss: 0.8295966982841492] [Elapsed time: 7897.32s]

[Epoch 164/200] [D loss: 0.015272] [G pixel loss: 0.177389, adv loss: 0.9722349047660828] [Elapsed time: 7946.07s]

[Epoch 165/200] [D loss: 0.027824] [G pixel loss: 0.170566, adv loss: 1.2339824438095093] [Elapsed time: 7994.11s]

[Epoch 166/200] [D loss: 0.027167] [G pixel loss: 0.174402, adv loss: 0.7651223540306091] [Elapsed time: 8042.09s]

[Epoch 167/200] [D loss: 0.016944] [G pixel loss: 0.175642, adv loss: 0.8624167442321777] [Elapsed time: 8089.94s]

[Epoch 168/200] [D loss: 0.011771] [G pixel loss: 0.175168, adv loss: 1.0963164567947388] [Elapsed time: 8138.83s]

[Epoch 169/200] [D loss: 0.015328] [G pixel loss: 0.178143, adv loss: 0.9898573756217957] [Elapsed time: 8186.68s]

[Epoch 170/200] [D loss: 0.040983] [G pixel loss: 0.192682, adv loss: 0.674818754196167] [Elapsed time: 8234.59s]

[Epoch 171/200] [D loss: 0.015219] [G pixel loss: 0.185358, adv loss: 1.1193597316741943] [Elapsed time: 8282.56s]

[Epoch 172/200] [D loss: 0.018121] [G pixel loss: 0.174457, adv loss: 0.8547428846359253] [Elapsed time: 8331.55s]

[Epoch 173/200] [D loss: 0.030641] [G pixel loss: 0.186524, adv loss: 0.8115360736846924] [Elapsed time: 8379.49s]

[Epoch 174/200] [D loss: 0.016824] [G pixel loss: 0.171252, adv loss: 0.894091784954071] [Elapsed time: 8427.49s]

[Epoch 175/200] [D loss: 0.016882] [G pixel loss: 0.170456, adv loss: 0.8616832494735718] [Elapsed time: 8475.51s]

[Epoch 176/200] [D loss: 0.009144] [G pixel loss: 0.157934, adv loss: 0.9213641881942749] [Elapsed time: 8524.40s]

[Epoch 177/200] [D loss: 0.009652] [G pixel loss: 0.169221, adv loss: 0.8785173296928406] [Elapsed time: 8572.41s]

[Epoch 178/200] [D loss: 0.012084] [G pixel loss: 0.175504, adv loss: 1.0288289785385132] [Elapsed time: 8620.37s]

[Epoch 179/200] [D loss: 0.008100] [G pixel loss: 0.192016, adv loss: 1.081184983253479] [Elapsed time: 8668.26s]

[Epoch 180/200] [D loss: 0.018144] [G pixel loss: 0.165491, adv loss: 1.215390920639038] [Elapsed time: 8717.04s]

[Epoch 181/200] [D loss: 0.013946] [G pixel loss: 0.154779, adv loss: 1.1482352018356323] [Elapsed time: 8765.02s]

[Epoch 182/200] [D loss: 0.015590] [G pixel loss: 0.180115, adv loss: 0.9094292521476746] [Elapsed time: 8812.84s]

[Epoch 183/200] [D loss: 0.070801] [G pixel loss: 0.155887, adv loss: 0.5568445920944214] [Elapsed time: 8860.85s]

[Epoch 184/200] [D loss: 0.018930] [G pixel loss: 0.162062, adv loss: 0.8197201490402222] [Elapsed time: 8909.80s]

[Epoch 185/200] [D loss: 0.048733] [G pixel loss: 0.164347, adv loss: 0.704258143901825] [Elapsed time: 8957.57s]

[Epoch 186/200] [D loss: 0.014725] [G pixel loss: 0.170123, adv loss: 1.0082449913024902] [Elapsed time: 9005.52s]

[Epoch 187/200] [D loss: 0.010266] [G pixel loss: 0.180229, adv loss: 0.9917802810668945] [Elapsed time: 9053.48s]

[Epoch 188/200] [D loss: 0.012535] [G pixel loss: 0.163434, adv loss: 0.8837308883666992] [Elapsed time: 9102.34s]

[Epoch 189/200] [D loss: 0.022808] [G pixel loss: 0.166665, adv loss: 0.7575783729553223] [Elapsed time: 9150.28s]

[Epoch 190/200] [D loss: 0.010755] [G pixel loss: 0.179229, adv loss: 0.8983967304229736] [Elapsed time: 9198.24s]

[Epoch 191/200] [D loss: 0.010936] [G pixel loss: 0.165294, adv loss: 0.9712036848068237] [Elapsed time: 9246.23s]

[Epoch 192/200] [D loss: 0.007615] [G pixel loss: 0.156649, adv loss: 0.9319448471069336] [Elapsed time: 9295.10s]

[Epoch 193/200] [D loss: 0.008265] [G pixel loss: 0.145452, adv loss: 0.9319097399711609] [Elapsed time: 9343.13s]

[Epoch 194/200] [D loss: 0.013695] [G pixel loss: 0.183919, adv loss: 0.8213084936141968] [Elapsed time: 9390.98s]

[Epoch 195/200] [D loss: 0.010004] [G pixel loss: 0.146489, adv loss: 0.9926756620407104] [Elapsed time: 9439.01s]

[Epoch 196/200] [D loss: 0.014501] [G pixel loss: 0.154261, adv loss: 1.155073642730713] [Elapsed time: 9487.77s]

[Epoch 197/200] [D loss: 0.012006] [G pixel loss: 0.184645, adv loss: 0.8909494876861572] [Elapsed time: 9535.80s]

[Epoch 198/200] [D loss: 0.004491] [G pixel loss: 0.170718, adv loss: 1.0182318687438965] [Elapsed time: 9583.65s]

[Epoch 199/200] [D loss: 0.008388] [G pixel loss: 0.154336, adv loss: 0.9526118636131287] [Elapsed time: 9631.55s]
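The sample images are named after the global batch counter `done = epoch * len(train_dataloader) + i`. Mapping a file name back to an (epoch, batch index) pair is a `divmod`. The sketch below assumes, hypothetically, 400 batches per epoch: the 400 Facades training images with batch size 1. The notebook's actual batch size is set earlier and may differ, which would change the epochs shown.

```python
batches_per_epoch = 400  # assumption: 400 training images, batch_size=1
sample_interval = 200

def locate(done, batches_per_epoch):
    # Inverse of done = epoch * batches_per_epoch + i
    return divmod(done, batches_per_epoch)

for done in (1200, 2400, 10000):
    epoch, i = locate(done, batches_per_epoch)
    assert done % sample_interval == 0  # these counters trigger a sample
    print(f"{done}.png -> epoch {epoch}, batch {i}")
```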

- Display some of the generated sample images.

from IPython.display import Image

Image('1200.png')


Image('10000.png')


Image('2400.png')


Saving and Testing the Trained Model Parameters

- The following code saves the trained model parameters and downloads them

# Save the model parameters

torch.save(generator.state_dict(), "Pix2Pix_Generator_for_Facades.pt")

torch.save(discriminator.state_dict(), "Pix2Pix_Discriminator_for_Facades.pt")

print("Model saved!")

Model saved!

# Download the model parameters (google.colab.files works only inside Colab)

from google.colab import files

files.download('Pix2Pix_Generator_for_Facades.pt')

files.download('Pix2Pix_Discriminator_for_Facades.pt')


- A trained model can also be downloaded, loaded, and tested

!wget https://postechackr-my.sharepoint.com/:u:/g/personal/dongbinna_postech_ac_kr/EQPOZG2WXrlHirX5l8SHM9MBVcE0pLoIAdYwd3-hWXm73Q?download=1 -O Pix2Pix_Generator_for_Facades.pt

!wget https://postechackr-my.sharepoint.com/:u:/g/personal/dongbinna_postech_ac_kr/EYlJJGhQ5OFKp14jS_zGy8kBekJSkIe3ioN8LFIk59oa6A?download=1 -O Pix2Pix_Discriminator_for_Facades.pt

--2022-03-21 15:32:15-- https://postechackr-my.sharepoint.com/:u:/g/personal/dongbinna_postech_ac_kr/EQPOZG2WXrlHirX5l8SHM9MBVcE0pLoIAdYwd3-hWXm73Q?download=1

Resolving postechackr-my.sharepoint.com (postechackr-my.sharepoint.com)... 13.107.136.9, 13.107.138.9

Connecting to postechackr-my.sharepoint.com (postechackr-my.sharepoint.com)|13.107.136.9|:443... connected.

HTTP request sent, awaiting response... 302 Found

Location: /personal/dongbinna_postech_ac_kr/Documents/Research/models/Pix2Pix/Pix2Pix_Generator_for_Facades.pt [following]

--2022-03-21 15:32:16-- https://postechackr-my.sharepoint.com/personal/dongbinna_postech_ac_kr/Documents/Research/models/Pix2Pix/Pix2Pix_Generator_for_Facades.pt

Reusing existing connection to postechackr-my.sharepoint.com:443.

HTTP request sent, awaiting response... 200 OK

Length: 217624563 (208M) [application/octet-stream]

Saving to: ‘Pix2Pix_Generator_for_Facades.pt’

Pix2Pix_Generator_f 100%[===================>] 207.54M 53.3MB/s in 7.0s

2022-03-21 15:32:24 (29.8 MB/s) - ‘Pix2Pix_Generator_for_Facades.pt’ saved [217624563/217624563]

--2022-03-21 15:32:24-- https://postechackr-my.sharepoint.com/:u:/g/personal/dongbinna_postech_ac_kr/EYlJJGhQ5OFKp14jS_zGy8kBekJSkIe3ioN8LFIk59oa6A?download=1

Resolving postechackr-my.sharepoint.com (postechackr-my.sharepoint.com)... 13.107.136.9, 13.107.138.9

Connecting to postechackr-my.sharepoint.com (postechackr-my.sharepoint.com)|13.107.136.9|:443... connected.

HTTP request sent, awaiting response... 302 Found

Location: /personal/dongbinna_postech_ac_kr/Documents/Research/models/Pix2Pix/Pix2Pix_Discriminator_for_Facades.pt [following]

--2022-03-21 15:32:25-- https://postechackr-my.sharepoint.com/personal/dongbinna_postech_ac_kr/Documents/Research/models/Pix2Pix/Pix2Pix_Discriminator_for_Facades.pt

Reusing existing connection to postechackr-my.sharepoint.com:443.

HTTP request sent, awaiting response... 200 OK

Length: 11074771 (11M) [application/octet-stream]

Saving to: ‘Pix2Pix_Discriminator_for_Facades.pt’

Pix2Pix_Discriminat 100%[===================>] 10.56M 3.29MB/s in 3.2s

2022-03-21 15:32:29 (3.29 MB/s) - ‘Pix2Pix_Discriminator_for_Facades.pt’ saved [11074771/11074771]

# Create the generator and the discriminator, then load the saved parameters

generator = GeneratorUNet()

discriminator = Discriminator()

generator.cuda()

discriminator.cuda()

generator.load_state_dict(torch.load("Pix2Pix_Generator_for_Facades.pt"))

discriminator.load_state_dict(torch.load("Pix2Pix_Discriminator_for_Facades.pt"))

generator.eval();

discriminator.eval();
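`eval()` switches layers such as dropout and batch normalization to inference behavior. The pure-Python sketch below shows inverted dropout with p = 0.5, the scheme `torch.nn.Dropout` uses. During training, each element is zeroed with probability p and the survivors are scaled by 1/(1-p). In eval mode the layer is the identity. The `dropout` function is a hypothetical illustration. Whether this notebook's `GeneratorUNet` contains dropout depends on its definition earlier. The pix2pix paper describes keeping dropout active at test time as a source of noise, so calling `eval()` here is this notebook's choice.

```python
import random

def dropout(xs, p=0.5, training=True, rng=random):
    # Inverted dropout: zero with probability p and rescale in training mode,
    # identity in eval mode
    if not training:
        return list(xs)
    scale = 1.0 / (1.0 - p)
    return [0.0 if rng.random() < p else x * scale for x in xs]

xs = [1.0, 2.0, 3.0, 4.0]
train_out = dropout(xs, training=True, rng=random.Random(0))
eval_out = dropout(xs, training=False)
print("train:", train_out)  # each entry is 0.0 or doubled
print("eval: ", eval_out)   # unchanged
```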

# Take one validation batch (10 images) and translate it without tracking gradients
with torch.no_grad():
    imgs = next(iter(val_dataloader))
    real_A = imgs["B"].cuda()
    real_B = imgs["A"].cuda()
    fake_B = generator(real_A)

# real_A: condition, fake_B: translated image, real_B: ground-truth image
# Concatenate the three along the height dimension
img_sample = torch.cat((real_A.data, fake_B.data, real_B.data), -2)
save_image(img_sample, "result.png", nrow=5, normalize=True)

/usr/local/lib/python3.7/dist-packages/torch/utils/data/dataloader.py:481: UserWarning: This DataLoader will create 4 worker processes in total. Our suggested max number of worker in current system is 2, which is smaller than what this DataLoader is going to create. Please be aware that excessive worker creation might get DataLoader running slow or even freeze, lower the worker number to avoid potential slowness/freeze if necessary.

cpuset_checked))
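The shape of `result.png` follows from two pieces of arithmetic. First, concatenating along dim -2 (height) stacks condition, output and ground truth vertically. Second, `save_image` lays the batch out with `torchvision.utils.make_grid`, whose default padding is 2 pixels. The helpers below are hypothetical re-implementations of that arithmetic in pure Python. They assume a batch of 10 images of 3×256×256.

```python
import math

def cat_shape(shapes, dim):
    # Shape produced by torch.cat(tensors, dim) for the given input shapes
    dim = dim % len(shapes[0])
    out = list(shapes[0])
    out[dim] = sum(s[dim] for s in shapes)
    return tuple(out)

def grid_size(n, h, w, nrow=5, padding=2):
    # Layout arithmetic of make_grid: min(nrow, n) columns, enough rows for n images
    xmaps = min(nrow, n)
    ymaps = math.ceil(n / xmaps)
    return (h + padding) * ymaps + padding, (w + padding) * xmaps + padding

batch = (10, 3, 256, 256)  # assumed: 10 validation images of 3x256x256
stacked = cat_shape([batch, batch, batch], dim=-2)
print(stacked)  # (10, 3, 768, 256)
print(grid_size(stacked[0], stacked[2], stacked[3]))  # (1542, 1292): height, width in pixels
```

So the saved grid has 2 rows of 5 columns, and each cell shows condition, translation and ground truth from top to bottom.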

from IPython.display import Image

Image('result.png')
